The generative AI adoption spectrum

Why generative AI needs engineers and architects more than ever before

👋 Hi, my name is Christian.

I work as an AWS Solutions Architect at DFL Digital Sports GmbH and am based in Cologne with my beloved wife and two kids. I am interested in all things ☁️ (cloud), 👨💻 (tech) and 🧠 (AI/ML).

With 10+ years of experience in several roles, I have a lot to talk about and love to share my experiences. I worked as a software developer in several companies in the media and entertainment business, as well as a solution engineer in a consulting company.

I love the challenge of providing highly scalable systems for millions of users. And I love collaborating with lots of people to design systems in front of a whiteboard.

I have been using AWS since 2013, when we built a voting system for a big live TV show in Germany. Since then I have become a big fan of cloud, AWS and domain-driven design.

Generative AI dominates not only my social media feeds but also daily talks and discussions. It is a huge topic in the media & entertainment industry where I work, and far beyond it. Besides my excitement about this new technology, I noticed an interesting change in my feelings whenever I think about generative AI, and to be honest, I have never felt this way before. A good time for self-reflection and for writing down my thoughts, both to share them and as a kind of self-therapy.

Let me jump straight to the conclusion: it is the first time in my tech career that I have felt a certain fear of technology.

Writing down my thoughts helped me to look at the matter objectively.

A natural fear of becoming irrelevant

Reading about how generative AI will change the way we deal with technology makes me afraid of becoming irrelevant. It will change the way we build applications and solutions. It will change the way people use software. And that feeds my fear of not being able to keep up.

A few words about me. I am 39 years old and started my tech career early, resulting in almost 20 years of experience in software development and IT. I now work as an AWS Solutions Architect at DFL Digital Sports in Germany. I have gone through several transformations: from Web 1.0 to Web 2.0, and from on-premises to virtualization to cloud. Although these transitions also had a huge impact, I never faced them with uncertainty, fear, or insecurity.

It reminded me of my first instructor during my apprenticeship as an IT professional. He had a hard time adapting to and understanding object-oriented programming. For me, this was bread and butter, as I had learned it from scratch in school. For him - with his mainframe and sequential programming background - it felt like a whole new world that was not as easily accessible as it was for me. His natural reaction was fear of becoming irrelevant.

Business value is not a perpetual motion machine

In my business context, a lot of people are talking about generative AI and using this easily accessible technology to show cool demos or proofs of concept. Many of them have no technical background at all. But isn't this good? In a way, yes. But what scares me are fictitious discussions like this:

Does our astronaut know what he is talking about? A valid question for the architect to ask would also be: "What holds you back from proceeding?" I bet there are some things to mention. The most obvious: the astronaut needs help to integrate this new capability into the existing value chain, a typical task in which engineers and architects are involved. Business value is not created out of thin air or by just introducing a new tool. Creating business value requires the interconnection of people, processes, AND technology.

Less obvious are the questions around data privacy and value proposition. If a business decides to use an off-the-shelf GenAI tool: What happens with my business data? What data do I have to share? How sensitive or worth protecting is this data? And what makes my tenant better than the tenant of our competitors?

How can architects help? Exactly with this!

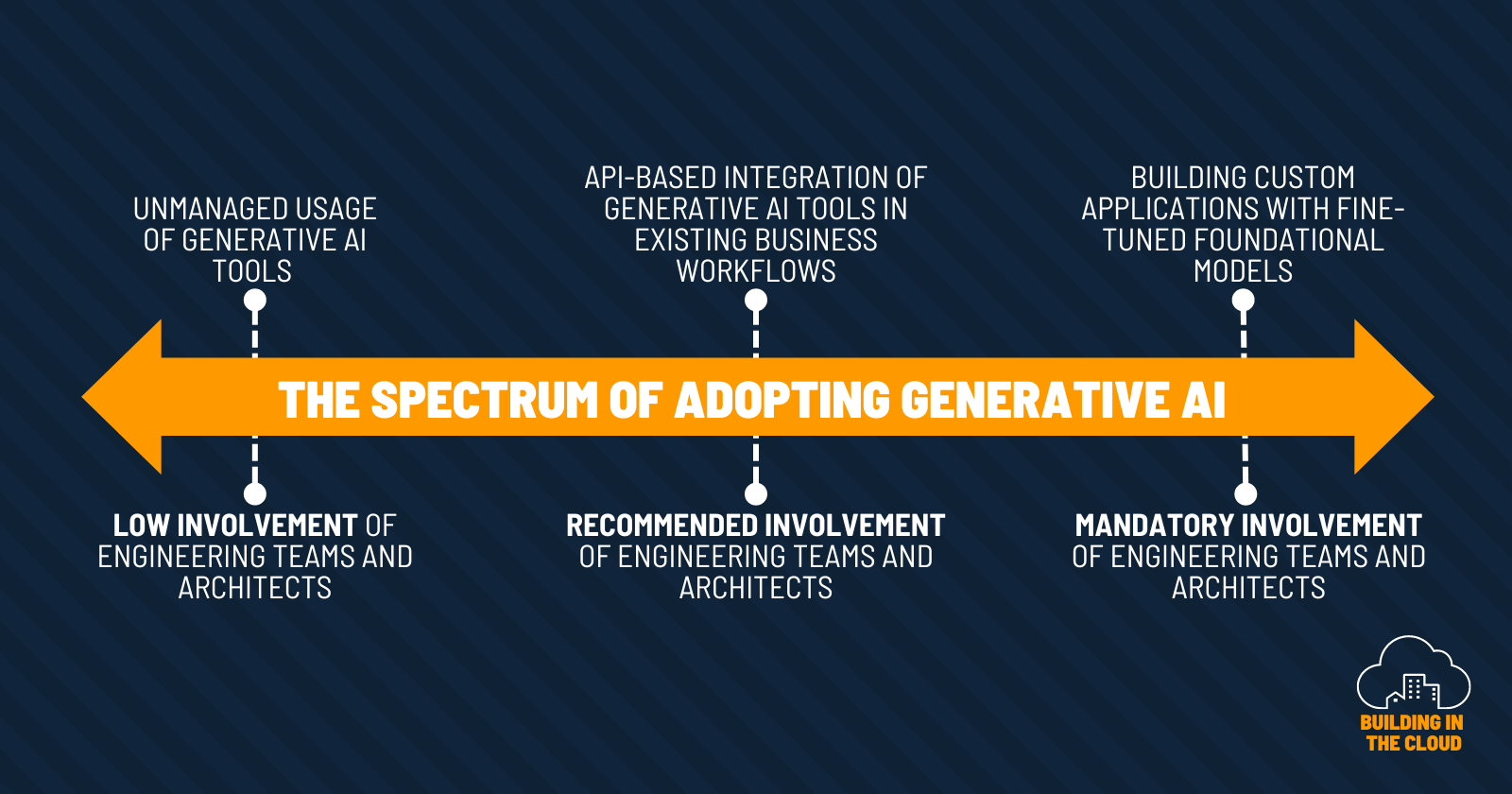

The spectrum of adopting generative AI

Adopting generative AI covers a broad spectrum: from just using ChatGPT somewhere in your company, to integrating GenAI tools via API into your existing workflows, up to building your own capabilities by fine-tuning foundation models. Depending on the adoption level, the involvement of professional engineers and related roles is recommended or mandatory.

Professional software engineering is so much more than just coding! And it is just one part of the value chain of your business.

We discussed this topic in the AWS Community Builders program and got great feedback from several builders and subject matter experts. That was helpful for getting a broad range of perspectives, and it highlighted the importance of thinking about the responsible use of AI.

The value of architects

Architects are needed in discussions like this to help people and businesses create real, measurable, and sustainable business value in the end. Architects sell options. And this becomes especially relevant as new technology arises. Hence our contributions might be more important in the future than ever before.

And this gives me the feeling of still being needed in the future.

The more I think about this and reflect on my words, the more I conclude that our domain, the business of IT, was dominated by a sense of being untouchable. We only heard about "IT and software eating the world/replacing jobs" because we were the ones who created this software. Now something like generative AI has the potential to destroy this worldview of "being untouchable". Software eats software. In a way. Fear seems to be a natural reaction. But we architects have the power and skills to see things from multiple perspectives.

But what if generative AI or LLMs have the power to replace certain tasks of architects or engineers? Let me come back to the story of my first instructor during my apprenticeship. What would I recommend to him with the experience I have today? Not much but:

There is a chance of shifting perspectives!

And maybe this is exactly the situation we are facing right now. Maybe it is time to shift perspectives. Engineers and architects who fear being replaced can change their spectrum of responsibilities, for example by leaving the engine room to some degree and gaining a better understanding of the business domain they are in. Extending their skillset beyond just coding lets them build even better solutions in the future.

Shifting perspectives can also mean that we all have to learn what this new technology means for us. Simple questions like this one from AWS Serverless Hero Yan Cui indicate that we are all at the very early stages of this emerging tech.

My take on this: I would consider prompts to be code. I define code as something that provides business value. And so yes: prompts (and their quality) do the same, and hence I would put them in the same category.

This means we could also talk about how to test prompts. What does CI/CD or TDD look like for prompts? Will some tools do the job for me? What does this mean for observability? And dear astronaut (see the chat above), let's meet for a coffee and talk about vendor lock-ins.
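To make the testing question a bit more tangible, here is a minimal sketch in Python of how a prompt could be treated like any other piece of code: kept in version control, rendered by a function, and checked by tests that run against a stand-in model. Everything in it is an assumption made for illustration: the prompt text, the names `SUMMARY_PROMPT`, `build_prompt`, `summarize`, and `fake_model`, and the match report use case are all hypothetical. A real setup would call an actual LLM and would need far richer quality checks than a word count.

```python
from string import Template
from typing import Callable

# Hypothetical prompt, versioned alongside the application code.
SUMMARY_PROMPT = Template(
    "Summarize the following match report in at most $max_words words. "
    "Answer in English only.\n\nReport:\n$report"
)


def build_prompt(report: str, max_words: int = 50) -> str:
    """Render the prompt and reject empty input before it reaches a model."""
    if not report.strip():
        raise ValueError("report must not be empty")
    return SUMMARY_PROMPT.substitute(report=report.strip(), max_words=max_words)


def summarize(report: str, model: Callable[[str], str], max_words: int = 50) -> str:
    """Send the rendered prompt to the model and enforce the requested word limit."""
    answer = model(build_prompt(report, max_words))
    if len(answer.split()) > max_words:
        raise ValueError("model exceeded the requested word limit")
    return answer


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call (assumption: any callable from str to str)."""
    return "Home team won 2:1 after a late goal."


def test_prompt_contains_constraints() -> None:
    # The rendered prompt must carry the word limit and the trimmed report.
    prompt = build_prompt("  Late goal decides the match.  ", max_words=10)
    assert "at most 10 words" in prompt
    assert prompt.endswith("Late goal decides the match.")


def test_summary_respects_word_limit() -> None:
    # With the fake model, the output contract can be checked deterministically.
    summary = summarize("Some long match report", fake_model, max_words=10)
    assert len(summary.split()) <= 10


if __name__ == "__main__":
    test_prompt_contains_constraints()
    test_summary_respects_word_limit()
    print("all prompt checks passed")
```

Passing the model in as a plain callable is a deliberate choice in this sketch: the same tests can run in a CI pipeline without network access, and swapping the vendor behind `model` does not touch the prompt or its tests.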

Conclusion

The adoption of generative AI requires both skilled architects and engineers. Businesses need roles and people with skills that can connect the dots and build bridges between business and IT. Maybe this is more important than ever.

Certain tasks of engineers might be replaced by AI. What can't be replaced is the knowledge about the business and how to translate a business strategy into running software, regardless of whether we write the code ourselves or write prompts in the future.

The output of LLMs is not wisdom. It is just a statistically calculated sequence of words given a concrete context. LLMs know how language works. But they don't know how to run your current business or how to create your next business strategy. And they also do not know about your individual technological, organizational, or environmental constraints.

But your colleagues do. Hence replacing this intellectual property is a risk that you should manage actively.